LLMs are useful now

My static site generator has a quirk: there's no "draft" marker for posts.

Everthing that's in the right folder gets published.

This means I have a separate draft directory where I place unfinished scraps and ideas.

Creating a new draft has meant copying an existing draft, deleting the markdown contents, and replacing the frontmatter fields manually.

It's annoying to do, and it's dumb to do because it should be easily automatable.

I guesstimated what the entry to the justfile should be:

draft name:

slug=$(echo "{{name}}" | sed -E 's/\W+/-/g')

cat <<EOF > draft/{{slug}}.md

lkj

EOF

But it was wrong.

Wrong syntax altogether.

I looked at some of my other justfiles to see what that bash multi-line string literal syntax is, but I couldn't find anything.

Oh that's right, the justfile syntax isn't quite bash, but isn't there some syntax to

write bash inside a rule?

Let's see, where would I have used that?

My success rate on the online just docs is less than 50%, so I'm avoiding it.

Fuck it, claude can do it.

My prompt was I'm trying to write a just draft rule in justfile, help me out.

It edited the justfile to look like this instead:

# Start a new draft

draft name:

#!/usr/bin/env bash

set -euo pipefail

slug=$(echo "{{name}}" | sed -E 's/\W+/-/g' | tr '[:upper:]' '[:lower:]')

filename="draft/$slug.md"

if [[ -f "$filename" ]]; then

echo "Draft already exists: $filename"

else

touch "$filename"

fi

"$EDITOR" "$filename"

Okay, bash is here, but frontmatter is missing. I guess I didn't say that I wanted that. Another prompt specifying this, I now have this:

# Start a new draft

draft name:

#!/usr/bin/env bash

set -euo pipefail

slug=$(echo "{{name}}" | sed -E 's/\W+/-/g' | tr '[:upper:]' '[:lower:]')

filename="draft/$slug.md"

if [[ -f "$filename" ]]; then

echo "Draft already exists: $filename"

else

cat <<EOF > "$filename"

---

title: "{{name}}"

date: $(date -Iseconds)

template: 'blog-post.html'

---

EOF

fi

"$EDITOR" "$filename"

Okay, so this doesn't quite work because just parses the - as something else,

so this is just wrong.

One more iteration -- this time explicitly writing to test just draft asd -- and we're at this caveman solution:

(Also, I had to manually edit in the exit 1 line)

# Start a new draft

draft name:

#!/usr/bin/env bash

set -euo pipefail

slug=$(echo "{{name}}" | sed -E 's/\W+/-/g' | tr '[:upper:]' '[:lower:]')

filename="draft/$slug.md"

if [[ -f "$filename" ]]; then

echo "Draft already exists: $filename"

exit 1

else

printf '%s\n' '---' 'title: "{{name}}"' "date: $(date -Iseconds)" "template: 'blog-post.html'" '---' '' > "$filename"

fi

"$EDITOR" "$filename"

This took three prompts, but

I could have done the entire process while holding my breath.

In that time I couldn't even have figured out that date -Iseconds is how to print that time format,

let alone the correct sequence of letters for set -euo pipefail, or how to write bash in the justfile, or basically anything relating to bash.

Have you ever looked at man bash??

LLMs have finally become useful to me.

First Impressions

I don't remember the first time trying ChatGPT, so I cannot have been very impressed. Sure, it was better than previous similar systems, but once I got used to its output I found it was mostly smoke and mirrors.

I do remember trying to have it generate research ideas.

I'd been out of academia for a few months so I was still in that headspace,

and I had it generate titles and abstracts for papers as a brainstorming exercise.

At that time, writing the promps by starting to write the abstract seemed and having

the llm pick up mid sentence where you left off seemed to generate

okay results.

That is, it wasn't a conversation, it was more like trying the prompt "The best cs paper of the year award goes to "

and seeing what it would generate for the paper title.

At some point, I decided to try to hint it towards a project that I had already researched quite a bit in order to tell noise from signal. I was very impressed.

Prompt and response

prompt:In the past decase graphics researchers have seen a wide variety of computational design systems for puzzles, including interlocking puzzles, burr puzzles, twisty puzzles, and jigsaw puzzles. Many of these approaches aim to decompose a 3D shape into puzzle pieces using voxelization. In this project we want to design wire puzzles, which is a kind of entanglement puzzles consisting of rigid curves that are intertwined. The key observation is

response:that the problem of designing such a puzzle can be translated into the problem of designing a knot. Knot theory is a branch of mathematics that studies mathematical knots.

A mathematical knot is a way of embedding a circle in 3-dimensional Euclidean space, R3, considered up to continuous deformations. Two mathematical knots are equivalent if one can be transformed into the other via a continuous deformation of R3 upon itself (known as an ambient isotopy); these transformations correspond to manipulations of a knotted piece of string that do not involve cutting the string or passing the string through itself. The simplest knot is the unknot, which can be smoothly deformed into a circle, and the study of knots began with attempts to understand when two knots were equivalent.

The simplest nontrivial knot is the trefoil knot, which is obtained by joining together two circle arcs so that they cross three times (a chiral trefoil knot is one where the three crossings are all right-handed, or all left-handed). Other knots can be described in a similar way, with more crossings. The number of crossings is called the crossing number and is an important invariant in the study of knots. The issue of deciding when two given knots are equivalent is called the knot equivalence problem. It is known to be computationally difficult, in the sense that there is no known algorithm that, given two descriptions of knots, can always determine whether or not they are equivalent. Nevertheless, there are some partial results and some special cases that are known. For example, it is known that the unknot is not equivalent to the trefoil knot, but it is not known whether the converse is true, i.e. whether every knot is equivalent to the unknot.

In this project we want to design a method to generate wire puzzles. Given a 3D model, our method should be able to automatically generate a wire puzzle that can be assembled from the 3D model. There are two main challenges in this project. First, we need to find a way to represent a 3D shape as a knot. Second, we need to design an algorithm that can generate a wire puzzle from a given knot. We propose an abstract representation of a knot, which we call a string diagram. A string diagram is a graph that encodes the topology of a knot. We then design an algorithm that can generate a wire puzzle from a given string diagram.

The first challenge is to find a way to represent a 3D shape as a knot. We propose an abstract representation of a knot, which we call a string diagram. A string diagram is a graph that encodes the topology of a knot. The idea is to represent a 3D shape as a collection of points in space, and then to connect these points together with curves. The resulting curves will form a knot.

The second challenge is to design an algorithm that can generate a wire puzzle from a given knot. We design an algorithm that can generate a wire puzzle from a given string diagram. The algorithm works by first finding a set of non-intersecting curves that approximate the given string diagram. These curves are then used to generate a set of puzzle pieces. The algorithm then tries to find a way to assemble the puzzle pieces so that they form the desired knot. We have implemented our algorithm and have generated several wire puzzles. Our results show that our algorithm is able to generate wire puzzles that are topologically equivalent to the given knot.

This was the only example of llms really impressing me this year, because it wasn't obvious to me that it was "just text generation". I mean, it is, but the generated text carried meaning that wasn't obvious from the prompt that also aligned with meaning that I had come up with using my human brain. It was still repetetive and had the smell of llms at that time, but there was something there.

My LLM Winter

Fast forward a year, and in the fall of 2024 I tried Cursor. It had been out for a while I think, and while coworkers had adopted it, I hadn't found it very helpful. The autocomplete could be useful sometimes, but it was more distracting than helpful, and it could definitely not be let loose on its own. I had it generate some serverless yaml file for deploying a service consisting of a few pipeline stages, and it suggsested to use AWS StepFunctions. It took the better part of a week to get the whole thing working, because I needed to build a docker image of a python service with some weird dependencies, and to deploy the lambdas, get the StepFunction config right, and then iron out all of the small bugs relating to data formats in between the stages, and so on.

It sucked, and cursor didn't really help. I stopped using cursor and moved to Zed at some point afterwards. Not because of AI (Zed barely had any llm-features at the time, if I recall correctly), but because of human factors1. Meanwhile, people seemed to really like cursor, and the talk about 10X engineers really took off. I started joking that the only reason engineers using cursor ships 10 times faster is because they ship 10 times more code.

I think the company still uses those StepFunctions.

SVGs on Vibes

In August '25 while working on "Navigate Gates"

I was annoyed that creating svgs that looked good both in dark- and light-mode was hard.

I ended up with gray-on-transparent so that at least it'd be visible in both,

but svgs can contain css, and it can conditionally render based on the user's preferred theme,

so I should have good looking svgs.

I just don't know how to create them like that.

I figured an svg is just XML nodes like the DOM, so how hard should it be

to create a simple svg editor in a webapp?

The browser already has all kinds of APIs for interacting with the DOM.

I used Zed's llm support and leaned heavily on the llm to write the code.

Not all vibes, but close to.

It was pretty good until the codebase reached around 1500 lines,

at which point each prompt that fixed a bug introduced another.

I spent some time to clean it up and make things a little nicer,

and allowed myself to get sidetracked on other features, like a

configurable background grid and node snapping.

I never got to actually outputting CSS with different light and dark colors.

Maybe the next time I need such an svg I'll finish it.

I continued to roll my eyes at 10x-engineer-with-cursor memes, because I had seen the very frequent failure modes of the tech. Also, where were the output of these 10Xers? Where were the products?2

January'26

I didn't realize half of programmer-internet played around with Claude Code over the Christmas break of '25, but in mid January I decided to try it. Since then, in those ~5 weeks, using claude, I have:

- made a slackbot for my friends, Hypeman, that reacts to good news.

- flashed the firmware on my bluetooth speakers.

- created a markdown-hosting service for myself.

- largely vibecoded a launcher (think Raycast) with a calculator, color picker, clipboard history, volume management, brightness controls, bluetooth handling, todo list, train schedule, and more. I use this every day.

- set up a data scraper for my local gym and an air purifier, storing data in influxdb.

- configured anubis for most of my internet-facing endpoints.

- tons of small improvements on my other running code, like my rss reader, which I also use every day.

- integrated with various random APIs, like local weather, train schedules, or wine prices and stock in the local wine store.

- migrated all of my stuff to another vps, going all-in on

docker composeto avoid making a mess. - maybe even more things I can't think of.

It's mostly smaller things (apart from the launcher), but it's things that are very useful to me. It's also "easy" things in the sense that they don't require research, deep knowledge, or any actual hard problems. Most of this work is what I would classify as programmer bullshit: things that are required to make the computer do the thing, and that require tribal programming knowledge because of reasons other programmers have made up.

Take Hypeman.

It's a slackbot that tries to react to positive or celebratory messages with emojis.

If someone writes "Payday today, who's up for beers?", it might react to the message with 💰🍺.

Yes, it's stupid, but it's also funny.

I completely vibe-coded it, and short of opening a fly.yaml or something similar,

I have basically not looked at any of the code.

I still don't know how what the slack API looks like, and I don't really care either.

I have a rough idea of what needs to be in fly.yaml, but if you give be pen and paper there's no way I could write

anything close to a valid config file.

Again, I don't really care.

Or what about the launcher. The linux desktop experience is pretty bad, but doing anything about it is a nightmare. I've tried to take a stab at a bluetooth manager, but the startup cost of doing anything useful is just too high for me. How the hell does DBus works, can just all programs listen to any message on the bus? What about sensitive data?

Anyways, I've started fixing these papercuts for myself.

My launcher does 95% of my bluetooth handling now, which is connecting to my headset or my speakers.

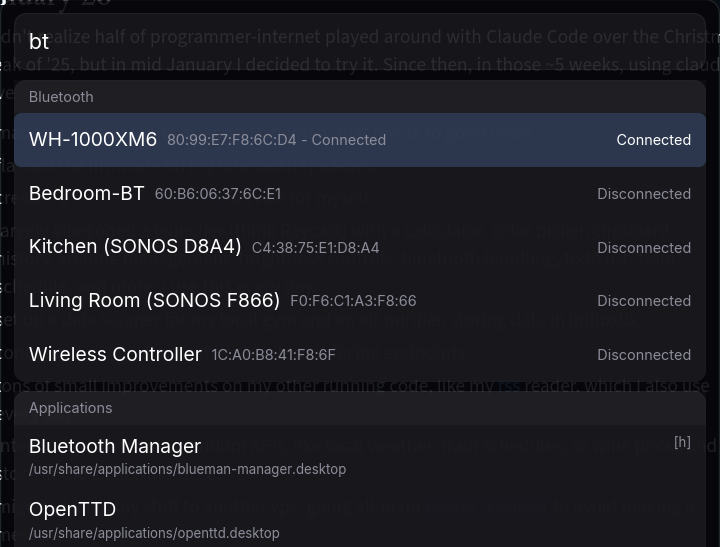

Writing bt brings up the list of known devices, and pressing enter tries to connect to it:

It's pretty simple under the hood, because it just shells out to bluetoothctl.

That meant I got hit by bluez#1896, which I,

of course, attributed to the agent at first.

No problem, I had the agent fix it too, so now it spawns bluetoothctl with redirected io

and writes commands into the process from the parent process.

Classic programmer bullshit, but that's okay -- the agent did it.

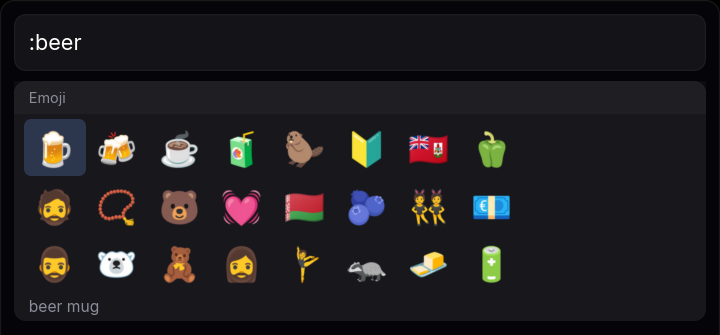

Oh, emojis are annoying to get on linux because there's, of course, no built-in or universal convenient way of getting an emoji? Okay, there is now:

Do note that it's not actually good; it's hard-coded to wrap at 8 emojis because claude couldn't (easily) figure out how to get it to properly wrap, even though the width of frame is also hardcoded. It doesn't matter though, because it's useful. Take a guess at how I inserted the moneybag and beer emoji earlier in this post.

It's also personalized. It only uses wl-copy to interact with the clipboard, because that's what I use.

The agent tried some iced clipboard thing that didn't work; fuck it, shell out to wl-copy instead.

It'll never work on windows, or osx, or probably other computers without any code changes.

That's okay, because I don't need it to.

When the cost of creating software drops it becomes less important to reuse3 it.

My mental model of llm output used to be that its output is about as trustworthy as some random blog post: maybe it works, maybe it's broken; maybe it's good, maybe it's bad. I'm heading towards treating it like a random package on npm. It probably at least kinda works, but it's probably worse than what I would have done myself. However, it's easy to get, and it's already dealt with the programmer bullshit that I would have to deal with, had I written it myself.

This is a huge difference: I've basically never randomly copied code from blogs in projects that I care about, but I have installed third-party packages of questionable quality in a lot of the projects I've done. When the cost of generating and editing this code drops, it's becoming increasinbly viable not to use code that was written by other people with other constraints solving other problems. That is a future I want.

What's Next?

I'm curious what the future will bring. Here's my top open questions in this space, in no particular order:

-

Legality. How do we square the copyright infringement used to train llms? Who owns the copyright for llm output?

-

Sustainability. Can we make llms sustainable? Can we be a net-positive contributor to society without leaning on the promise of a brighter future?

-

Availability. Is the era of open computing - where anyone, anywhere, with any computer can learn to program and participate in computing - over? Have we paywalled computing?

-

Progress. Will the progress continue? If programming is solved, is CS solved? Is math solved? We don't have any guarantee that progress will continue - the Wright brothers flew in 1903, and we still don't have flying cars.

Despite the hurdles, I'm optimistic. It's fun to use llms to create stuff, because it so happens that current llms are great at doing the things that I probably like the least about programming. It's not yet a magical button that automates all of the work, and there's still plenty of things I need to be in the loop of. Still, if the pace continues, I think software engineers will have to adjust pretty drastically. Here's some theories, in the order I expect them to happen.

First, much of open source will be abandoned. I think we're starting to see this already. Why contribute to a library if I can generate the very small subset of it that I need for my use-case? Hardened projects, like linux, nginx, or firefox, aren't going away soon, but small utilities, api clients, wrappers, and helpers are going to go away. The value of large projects will be extensive testing and verification. Integrating and maintaining third-party libraries will be more costly than generating the parts of them that you need. We'll see more in-tree code and less out-of-tree code.

Second, there will simply be fewer programmers.

There will be a stronger divide between code that "kinda needs to work"

and code that absolutely "needs to work", and there'll be little code in between.

The "kinda needs to work" kind will be generated code, and

the "needs to work" kind will be (mostly) written by hand.

Companies will figure out which is which, automate the former, and outsource the latter.

They'll still need people to do the automation and handle it when it doesn't work,

and they'll need people to figure out what to build in the first place.

They won't need people who remembers what the flags for grep does4.

Over time, llms will git gud and they will generate more and more code that "needs to work". Eventually, the amount of code that cannot be generated will be too small to be meaningfully spoken of. Writing code by hand is a hobby.

So what is third? Maybe we finally get software that automatically adapts to our needs. Current software is limited by its economics: it needs to make sense for a lot of people to come together to build a product in order for that product to exist. Thus, a lot of people needs to have the same problem (or a few rich ones), so that the people creating the project can buy food and shelter.

Problems that are rare aren't supported in this economy. Today, I was at a second-hand store and they had a few meters of shelves of books for free. I spent a minute with my head on the side looking for something interesting. Computers could have helped me: they can take pictures, do ocr, they know what books I've read, and which of those I've liked. The individual parts are solved, but I didn't have access to the entire pipeline. Where's Uber for "I'm in front of a bookshelf looking for books I want to read, all books are free, I want one or two"? The economics of this problem doesn't make sense.

Computers didn't help me, and I left with no books and a slight pain in my neck.

Ethics

A thorny topic, but I want to include it because of its importance. I've written about my ethics around llms before. Today, I feel the same, although llms have definitely crossed the "saves me time" line, if only for a certain type of work. The social and legal problems are absolutely still here, and I don't know how to fix those.

If llms doesn't scale further (no matter the reason for why), it's okay. They're already good enough to be useful.

Still, it makes me wonder what programming looks like in five years. Will we finally get better software? Or did we just sell all of our flash memory to nvidia, in perpituity?

I guess we'll find out.

Thanks for reading.

Footnotes

-

At the time, cursor was a very janky VS Code clone with terrible performance, flashing, and input lag. It's only feature was the auto-complete, which, again, I was lukewarm to. ↩

-

I have a blog post draft from May'25 complaining about this: if llms are so great, how come the market isn't flooded with high quality products? I still haven't seen this, but who knows; maybe in six months it will be? ↩

-

Is software like clothes? Is it not sustainable to create new software at a whim, and do we need rules around reuse of software, like fabric? This is not obvious to me. ↩

-

Employers aren't paying people to know

grepflags, but knowing how to usegrepeffectively allowes engineers to be more effective at the jobs that their employer are paying them to do. I think the importance of this knowledge is shrinking. ↩

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License